Simulated Sense of Touch

aurellem ☉

1 Touch

Touch is critical to navigation and spatial reasoning and as such I need a simulated version of it to give to my AI creatures.

Human skin has a wide array of touch sensors, each of which specializes in detecting different vibrational modes and pressures. These sensors can integrate a vast expanse of skin (e.g. your entire palm), or a tiny patch of skin at the tip of your finger. The hairs of the skin help detect objects before they even come into contact with the skin proper.

However, touch in my simulated world cannot exactly correspond to human touch because my creatures are made out of completely rigid segments that don't deform like human skin.

Instead of measuring deformation or vibration, I surround each rigid part with a plenitude of hair-like objects (feelers) which do not interact with the physical world. Physical objects can pass through them with no effect. The feelers are able to tell when other objects pass through them, and they constantly report how much of their extent is covered. So even though the creature's body parts do not deform, the feelers create a margin around those body parts which achieves a sense of touch which is a hybrid between a human's sense of deformation and sense from hairs.

Implementing touch in jMonkeyEngine follows a different technical route than vision and hearing. Those two senses piggybacked off jMonkeyEngine's 3D audio and video rendering subsystems. To simulate touch, I use jMonkeyEngine's physics system to execute many small collision detections, one for each feeler. The placement of the feelers is determined by a UV-mapped image which shows where each feeler should be on the 3D surface of the body.

2 Defining Touch Meta-Data in Blender

Each geometry can have a single UV map which describes the position of the feelers which will constitute its sense of touch. This image path is stored under the "touch" key. The image itself is black and white, with black meaning a feeler length of 0 (no feeler is present) and white meaning a feeler length of scale, which is a float stored under the key "scale".

(defn tactile-sensor-profile
  "Return the touch-sensor distribution image in BufferedImage format,
   or nil if it does not exist."
  [#^Geometry obj]
  (if-let [image-path (meta-data obj "touch")]
    (load-image image-path)))

(defn tactile-scale
  "Return the length of each feeler. Default scale is 0.1
   jMonkeyEngine units."
  [#^Geometry obj]
  (if-let [scale (meta-data obj "scale")]
    scale 0.1))

Here is an example of a UV-map which specifies the position of touch sensors along the surface of the upper segment of the worm.

Figure 1: This is the tactile-sensor-profile for the upper segment of the worm. It defines regions of high touch sensitivity (where there are many white pixels) and regions of low sensitivity (where white pixels are sparse).

3 Implementation Summary

To simulate touch there are three conceptual steps. For each solid object in the creature, you first have to get the UV image and scale parameter which define the position and length of the feelers. Then, you use the triangles which comprise the mesh and the UV data stored in the mesh to determine the world-space position and orientation of each feeler. Finally, once every frame, you update these positions and orientations to match the current position and orientation of the object, and use physics collision detection to gather tactile data.

Extracting the meta-data has already been described. The third step, physics collision detection, is handled in touch-kernel. Translating the positions and orientations of the feelers from the UV-map to world-space is itself a three-step process.

- Find the triangles which make up the mesh in pixel-space and in world-space. (triangles, pixel-triangles)
- Find the coordinates of each feeler in world-space. These are the origins of the feelers. (feeler-origins)
- Calculate the normals of the triangles in world space, and add them to each of the origins of the feelers. These are the normalized coordinates of the tips of the feelers. (feeler-tips)

4 Triangle Math

4.1 Shrapnel Conversion Functions

(defn vector3f-seq [#^Vector3f v]
  [(.getX v) (.getY v) (.getZ v)])

(defn triangle-seq [#^Triangle tri]
  [(vector3f-seq (.get1 tri))
   (vector3f-seq (.get2 tri))
   (vector3f-seq (.get3 tri))])

(defn ->vector3f
  ([coords] (Vector3f. (nth coords 0 0)
                       (nth coords 1 0)
                       (nth coords 2 0))))

(defn ->triangle [points]
  (apply #(Triangle. %1 %2 %3) (map ->vector3f points)))

It is convenient to treat a Triangle as a vector of vectors, and a Vector2f or Vector3f as vectors of floats. (->vector3f) and (->triangle) undo the operations of vector3f-seq and triangle-seq. If these classes implemented Iterable then seq would work on them automatically.

4.2 Decomposing a 3D shape into Triangles

The rigid objects which make up a creature have an underlying Geometry, which is a Mesh plus a Material and other important data involved with displaying the object.

A Mesh is composed of Triangles, and each Triangle has three vertices which have coordinates in world space and UV space.

Here, triangles gets all the world-space triangles which comprise a mesh, while pixel-triangles gets those same triangles expressed in pixel coordinates (which are UV coordinates scaled to fit the height and width of the UV image).

(in-ns 'cortex.touch)

(defn triangle
  "Get the triangle specified by triangle-index from the mesh."
  [#^Geometry geo triangle-index]
  (triangle-seq
   (let [scratch (Triangle.)]
     (.getTriangle (.getMesh geo) triangle-index scratch) scratch)))

(defn triangles
  "Return a sequence of all the Triangles which comprise a given
   Geometry."
  [#^Geometry geo]
  (map (partial triangle geo) (range (.getTriangleCount (.getMesh geo)))))

(defn triangle-vertex-indices
  "Get the triangle vertex indices of a given triangle from a given
   mesh."
  [#^Mesh mesh triangle-index]
  (let [indices (int-array 3)]
    (.getTriangle mesh triangle-index indices)
    (vec indices)))

(defn vertex-UV-coord
  "Get the UV-coordinates of the vertex named by vertex-index"
  [#^Mesh mesh vertex-index]
  (let [UV-buffer
        (.getData
         (.getBuffer
          mesh
          VertexBuffer$Type/TexCoord))]
    [(.get UV-buffer (* vertex-index 2))
     (.get UV-buffer (+ 1 (* vertex-index 2)))]))

(defn pixel-triangle [#^Geometry geo image index]
  (let [mesh (.getMesh geo)
        width (.getWidth image)
        height (.getHeight image)]
    (vec (map (fn [[u v]] (vector (* width u) (* height v)))
              (map (partial vertex-UV-coord mesh)
                   (triangle-vertex-indices mesh index))))))

(defn pixel-triangles
  "The pixel-space triangles of the Geometry, in the same order as
   (triangles geo)"
  [#^Geometry geo image]
  (let [height (.getHeight image)
        width (.getWidth image)]
    (map (partial pixel-triangle geo image)
         (range (.getTriangleCount (.getMesh geo))))))
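To make the UV-to-pixel scaling concrete, here is a minimal sketch in plain Clojure with no jMonkeyEngine types. The helper names uv->pixel and uv-triangle->pixel-triangle are hypothetical and exist only for this illustration, and the UV values are made up; the arithmetic is the same multiplication by image width and height that pixel-triangle performs.

```clojure
;; Hypothetical helpers, not part of cortex.touch.
(defn uv->pixel
  "Scale one UV coordinate pair (each in [0, 1]) to pixel coordinates."
  [width height [u v]]
  [(* width u) (* height v)])

(defn uv-triangle->pixel-triangle
  "Scale the three UV vertices of a triangle to pixel coordinates."
  [width height uv-triangle]
  (mapv (partial uv->pixel width height) uv-triangle))

;; A made-up UV triangle on a 256x128 image.
(def example-pixel-triangle
  (uv-triangle->pixel-triangle 256 128 [[0.0 0.0] [0.5 0.25] [1.0 1.0]]))
```

Because the scaling is applied per vertex, the order of the vertices (and therefore the correspondence with the world-space triangle) is left untouched.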

4.3 The Affine Transform from one Triangle to Another

pixel-triangles gives us the mesh triangles expressed in pixel coordinates and triangles gives us the mesh triangles expressed in world coordinates. The tactile-sensor-profile gives the position of each feeler in pixel-space. In order to convert pixel-space coordinates into world-space coordinates we need something that takes coordinates on the surface of one triangle and gives the corresponding coordinates on the surface of another triangle.

Triangles are affine, which means any (non-degenerate) triangle can be transformed into any other by a combination of translation, rotation, and possibly non-uniform scaling. The affine transformation from one triangle to another is readily computable if the triangle is expressed in terms of a \(4 \times 4\) matrix.

\[
\begin{bmatrix} x_1 & x_2 & x_3 & n_x \\ y_1 & y_2 & y_3 & n_y \\ z_1 & z_2 & z_3 & n_z \\ 1 & 1 & 1 & 1 \end{bmatrix}
\]

Here, the first three columns of the matrix are the vertices of the triangle. The last column is the right-handed unit normal of the triangle.

With two triangles \(T_{1}\) and \(T_{2}\) each expressed as a matrix like above, the affine transform from \(T_{1}\) to \(T_{2}\) is

\(T_{2}T_{1}^{-1}\)
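A short check of why this product does the job, assuming \(T_{1}\) is invertible (which holds for a non-degenerate triangle, since the normal column is independent of the three vertex columns):

\[
\left(T_{2}T_{1}^{-1}\right)T_{1} = T_{2}\left(T_{1}^{-1}T_{1}\right) = T_{2}
\]

Reading this column by column, the transform sends each column of \(T_{1}\) (a vertex of the first triangle in homogeneous coordinates, or its normal) to the corresponding column of \(T_{2}\).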

The Clojure code below recapitulates the formulas above, using jMonkeyEngine's Matrix4f objects, which can describe any affine transformation.

(in-ns 'cortex.touch)

(defn triangle->matrix4f
  "Converts the triangle into a 4x4 matrix: The first three columns
   contain the vertices of the triangle; the last contains the unit
   normal of the triangle. The bottom row is filled with 1s."
  [#^Triangle t]
  (let [mat (Matrix4f.)
        [vert-1 vert-2 vert-3]
        (mapv #(.get t %) (range 3))
        unit-normal (do (.calculateNormal t)(.getNormal t))
        vertices [vert-1 vert-2 vert-3 unit-normal]]
    ;; Note the naming: `row` picks the vertex (and so the matrix
    ;; column), while `col` picks the x/y/z coordinate (the matrix row).
    (dorun
     (for [row (range 4) col (range 3)]
       (do
         (.set mat col row (.get (vertices row) col))
         (.set mat 3 row 1)))) mat))

(defn triangles->affine-transform
  "Returns the affine transformation that converts each vertex in the
   first triangle into the corresponding vertex in the second
   triangle."
  [#^Triangle tri-1 #^Triangle tri-2]
  (.mult
   (triangle->matrix4f tri-2)
   (.invert (triangle->matrix4f tri-1))))
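Another way to picture what this transform does to a point lying on the pixel-triangle is through barycentric coordinates: an affine map that sends vertices to vertices keeps a point's barycentric weights unchanged. The sketch below uses that alternative technique in plain Clojure rather than the Matrix4f approach, purely as an illustration. The names barycentric and pixel->world are hypothetical, the triangles are made up, and it only handles points in the plane of the pixel-triangle.

```clojure
;; Hypothetical helpers, not part of cortex.touch.
(defn barycentric
  "Barycentric weights [w1 w2 w3] of the 2D point p relative to the
   2D triangle [a b c]."
  [[[ax ay] [bx by] [cx cy]] [px py]]
  (let [denom (+ (* (- by cy) (- ax cx)) (* (- cx bx) (- ay cy)))
        w1 (/ (+ (* (- by cy) (- px cx)) (* (- cx bx) (- py cy))) denom)
        w2 (/ (+ (* (- cy ay) (- px cx)) (* (- ax cx) (- py cy))) denom)]
    [w1 w2 (- 1 w1 w2)]))

(defn pixel->world
  "Map the 2D point p on pixel-tri to the matching point on the 3D
   triangle world-tri by reusing its barycentric weights."
  [pixel-tri world-tri p]
  (let [weights (barycentric pixel-tri p)]
    ;; For each of x, y, z: the weighted sum of the three world vertices.
    (apply mapv
           (fn [& coords] (reduce + (map * weights coords)))
           world-tri)))

;; Made-up triangles: a right triangle in pixel-space and a
;; differently oriented triangle in world-space.
(def pixel-tri [[0.0 0.0] [4.0 0.0] [0.0 4.0]])
(def world-tri [[0.0 0.0 0.0] [1.0 0.0 0.0] [0.0 0.0 1.0]])

(def mapped (pixel->world pixel-tri world-tri [2.0 1.0]))
```

Each vertex of pixel-tri lands on the corresponding vertex of world-tri, and interior points are carried along in proportion.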

4.4 Triangle Boundaries

For efficiency's sake I will divide the tactile-profile image into small boxes, one bounding each pixel-triangle, then extract the points which lie inside the triangle and map them to 3D-space using triangles->affine-transform above. To do this I need a function, convex-bounds, which finds the smallest axis-aligned box which contains a 2D triangle.

inside-triangle? determines whether a point is inside a triangle in 2D pixel-space.

(defn convex-bounds
  "Returns the smallest axis-aligned rectangle containing the given
   vertices, as a vector [left top width height] of whole-number
   values."
  [verts]
  (let [xs (map first verts)
        ys (map second verts)
        x0 (Math/floor (apply min xs))
        y0 (Math/floor (apply min ys))
        x1 (Math/ceil (apply max xs))
        y1 (Math/ceil (apply max ys))]
    [x0 y0 (- x1 x0) (- y1 y0)]))

(defn same-side?
  "Given the points p1 and p2 and the reference point ref, is point p
   on the same side of the line that goes through p1 and p2 as ref is?"
  [p1 p2 ref p]
  (<=
   0
   (.dot
    (.cross (.subtract p2 p1) (.subtract p p1))
    (.cross (.subtract p2 p1) (.subtract ref p1)))))

(defn inside-triangle?
  "Is the point inside the triangle?"
  {:author "Dylan Holmes"}
  [#^Triangle tri #^Vector3f p]
  (let [[vert-1 vert-2 vert-3] [(.get1 tri) (.get2 tri) (.get3 tri)]]
    (and
     (same-side? vert-1 vert-2 vert-3 p)
     (same-side? vert-2 vert-3 vert-1 p)
     (same-side? vert-3 vert-1 vert-2 p))))
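The bound-then-filter idea can be tried out without jMonkeyEngine. The following is a sketch in plain Clojure under a few assumptions: the helper names cross-2d, inside-2d?, candidate-pixels, and pixels-inside are hypothetical, the test is done directly in 2D using the z-component of the cross product, and every pixel in the bounding box is treated as a candidate (the real code only considers white pixels of the profile image).

```clojure
;; Hypothetical helpers, not part of cortex.touch.
(defn cross-2d
  "z-component of the cross product (b - a) x (p - a)."
  [[ax ay] [bx by] [px py]]
  (- (* (- bx ax) (- py ay)) (* (- by ay) (- px ax))))

(defn inside-2d?
  "Is p inside (or on the boundary of) the 2D triangle [a b c]?
   Uses the same same-side test as inside-triangle?."
  [[a b c] p]
  (letfn [(same-side? [p1 p2 ref]
            (<= 0 (* (cross-2d p1 p2 p) (cross-2d p1 p2 ref))))]
    (and (same-side? a b c)
         (same-side? b c a)
         (same-side? c a b))))

(defn candidate-pixels
  "All integer pixel coordinates in the bounding box of the triangle."
  [tri]
  (let [xs (map first tri)
        ys (map second tri)
        x0 (long (Math/floor (apply min xs)))
        x1 (long (Math/ceil (apply max xs)))
        y0 (long (Math/floor (apply min ys)))
        y1 (long (Math/ceil (apply max ys)))]
    (for [x (range x0 (inc x1))
          y (range y0 (inc y1))]
      [x y])))

(defn pixels-inside
  "Candidate pixels that actually lie inside the triangle."
  [tri]
  (filter (partial inside-2d? tri) (candidate-pixels tri)))
```

Scanning only the bounding box keeps the number of inside-triangle tests proportional to the size of each triangle rather than to the size of the whole image.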

5 Feeler Coordinates

The triangle-related functions above make short work of calculating the positions and orientations of each feeler in world-space.

(in-ns 'cortex.touch)

(defn feeler-pixel-coords
  "Returns the coordinates of the feelers in pixel space in lists, one
   list for each triangle, ordered in the same way as (triangles) and
   (pixel-triangles)."
  [#^Geometry geo image]
  (map
   (fn [pixel-triangle]
     (filter
      (fn [coord]
        (inside-triangle? (->triangle pixel-triangle)
                          (->vector3f coord)))
      (white-coordinates image (convex-bounds pixel-triangle))))
   (pixel-triangles geo image)))

(defn feeler-world-coords
  "Returns the coordinates of the feelers in world space in lists, one
   list for each triangle, ordered in the same way as (triangles) and
   (pixel-triangles)."
  [#^Geometry geo image]
  (let [transforms
        (map #(triangles->affine-transform
               (->triangle %1) (->triangle %2))
             (pixel-triangles geo image)
             (triangles geo))]
    (map (fn [transform coords]
           (map #(.mult transform (->vector3f %)) coords))
         transforms (feeler-pixel-coords geo image))))

(defn feeler-origins
  "The world space coordinates of the root of each feeler."
  [#^Geometry geo image]
  (reduce concat (feeler-world-coords geo image)))

(defn feeler-tips
  "The world space coordinates of the tip of each feeler."
  [#^Geometry geo image]
  (let [world-coords (feeler-world-coords geo image)
        normals
        (map
         (fn [triangle]
           (.calculateNormal triangle)
           (.clone (.getNormal triangle)))
         (map ->triangle (triangles geo)))]
    (mapcat (fn [origins normal]
              (map #(.add % normal) origins))
            world-coords normals)))

(defn touch-topology
  "The pixel-space coordinates of every feeler, passed through
   collapse. touch-kernel returns this alongside the sensor data, and
   view-touch uses it to lay out the touch display."
  [#^Geometry geo image]
  (collapse (reduce concat (feeler-pixel-coords geo image))))
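The relationship between origins and tips in feeler-tips (tip = origin + unit normal of the owning triangle) can be sketched with plain Clojure vectors. The helper names below (v-, v+, cross, unit, unit-normal, tips) are hypothetical and the triangle is made up. The normal is taken as (p2 - p1) x (p3 - p1), normalized, which is my understanding of what Triangle's calculateNormal computes.

```clojure
;; Hypothetical helpers, not part of cortex.touch.
(defn v- [a b] (mapv - a b))
(defn v+ [a b] (mapv + a b))

(defn cross
  "Cross product of two 3D vectors."
  [[ax ay az] [bx by bz]]
  [(- (* ay bz) (* az by))
   (- (* az bx) (* ax bz))
   (- (* ax by) (* ay bx))])

(defn unit
  "Scale v to length 1."
  [v]
  (let [len (Math/sqrt (reduce + (map * v v)))]
    (mapv #(/ % len) v)))

(defn unit-normal
  "Right-handed unit normal of the triangle [p1 p2 p3]."
  [[p1 p2 p3]]
  (unit (cross (v- p2 p1) (v- p3 p1))))

(defn tips
  "Feeler tips: each origin on the triangle offset by the unit normal."
  [tri origins]
  (let [n (unit-normal tri)]
    (mapv #(v+ % n) origins)))

;; A made-up triangle lying in the z = 0 plane.
(def flat-tri [[0 0 0] [1 0 0] [0 1 0]])
```

The tips computed this way sit exactly one unit from their origins; the actual feeler length is applied later, when touch-kernel sets each ray's limit to the tactile scale.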

6 Simulated Touch

touch-kernel generates functions to be called from within a simulation that perform the necessary physics collisions to collect tactile data, and touch! recursively applies it to every node in the creature.

(in-ns 'cortex.touch)

(defn set-ray [#^Ray ray #^Matrix4f transform
               #^Vector3f origin #^Vector3f tip]
  ;; Doing everything locally reduces garbage collection by enough to
  ;; be worth it.
  (.mult transform origin (.getOrigin ray))
  (.mult transform tip (.getDirection ray))
  (.subtractLocal (.getDirection ray) (.getOrigin ray))
  (.normalizeLocal (.getDirection ray)))

(import com.jme3.math.FastMath)

(defn touch-kernel
  "Constructs a function which will return tactile sensory data from
   'geo when called from inside a running simulation"
  [#^Geometry geo]
  (if-let
      [profile (tactile-sensor-profile geo)]
    (let [ray-reference-origins (feeler-origins geo profile)
          ray-reference-tips (feeler-tips geo profile)
          ray-length (tactile-scale geo)
          current-rays (map (fn [_] (Ray.)) ray-reference-origins)
          topology (touch-topology geo profile)
          correction (float (* ray-length -0.2))]
      ;; slight tolerance for very close collisions.
      (dorun
       (map (fn [origin tip]
              (.addLocal origin
                         (.mult (.subtract tip origin)
                                correction)))
            ray-reference-origins ray-reference-tips))
      (dorun (map #(.setLimit % ray-length) current-rays))
      (fn [node]
        (let [transform (.getWorldMatrix geo)]
          (dorun
           (map (fn [ray ref-origin ref-tip]
                  (set-ray ray transform ref-origin ref-tip))
                current-rays ray-reference-origins
                ray-reference-tips))
          (vector
           topology
           (vec
            (for [ray current-rays]
              (do
                (let [results (CollisionResults.)]
                  (.collideWith node ray results)
                  (let [touch-objects
                        (filter #(not (= geo (.getGeometry %)))
                                results)
                        limit (.getLimit ray)]
                    [(if (empty? touch-objects)
                       limit
                       (let [response
                             (apply min (map #(.getDistance %)
                                             touch-objects))]
                         (FastMath/clamp
                          (float
                           (if (> response limit) (float 0.0)
                               (+ response correction)))
                          (float 0.0)
                          limit)))
                     limit])))))))))))

(defn touch!
  "Endow the creature with the sense of touch. Returns a sequence of
   functions, one for each body part with a tactile-sensor-profile,
   each of which when called returns sensory data for that body part."
  [#^Node creature]
  (filter
   (comp not nil?)
   (map touch-kernel
        (filter #(isa? (class %) Geometry)
                (node-seq creature)))))
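The arithmetic that turns a ray's collision distances into a single feeler reading can be isolated from the physics engine. Below is a sketch in plain Clojure that mirrors the branch structure of touch-kernel as I read it; feeler-reading is a hypothetical name, the hit distances are made up, and max/min stand in for FastMath/clamp.

```clojure
;; Hypothetical helper, not part of cortex.touch.
(defn feeler-reading
  "Given the distances of all collisions along one feeler and the
   feeler's length limit, return the reported distance:
   - no collisions: the full limit (nothing is touching),
   - closest hit beyond the limit: 0.0,
   - otherwise: closest hit plus the (negative) correction,
     clamped to [0.0, limit]."
  [hit-distances limit]
  (let [correction (* limit -0.2)]
    (if (empty? hit-distances)
      limit
      (let [response (apply min hit-distances)]
        (-> (if (> response limit) 0.0 (+ response correction))
            (max 0.0)
            (min limit))))))

;; Made-up readings for a feeler of length 4.0.
(def untouched (feeler-reading [] 4.0))
(def pressed   (feeler-reading [2.0 3.0] 4.0))
(def flush     (feeler-reading [0.5] 4.0))
```

Here the correction compensates for the ray origins having been moved slightly back along the feeler, so that an object resting directly on the surface still registers as a collision.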

7 Visualizing Touch

Each feeler is represented in the image as a single pixel. The greyscale value of each pixel represents how deep the feeler represented by that pixel is inside another object. Black means that nothing is touching the feeler, while white means that the feeler is completely inside another object, which is presumably flush with the surface of the triangle from which the feeler originates.

(in-ns 'cortex.touch)

(defn touch->gray
  "Convert a pair of [distance, max-distance] into a gray-scale pixel."
  [distance max-distance]
  (gray (- 255 (rem (int (* 255 (/ distance max-distance))) 256))))

(defn view-touch
  "Creates a function which accepts a list of touch sensor-data and
   displays each element to the screen."
  []
  (view-sense
   (fn [[coords sensor-data]]
     (let [image (points->image coords)]
       (dorun
        (for [i (range (count coords))]
          (.setRGB image ((coords i) 0) ((coords i) 1)
                   (apply touch->gray (sensor-data i)))))
       image))))
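To see how a [distance, max-distance] pair maps onto brightness, here is the integer part of touch->gray on its own, without the call to gray. The name touch->gray-level is hypothetical and the distances are made up.

```clojure
;; Hypothetical helper: the 0-255 brightness that touch->gray would
;; hand to gray.
(defn touch->gray-level
  [distance max-distance]
  (- 255 (rem (int (* 255 (/ distance max-distance))) 256)))

(def nothing-touching (touch->gray-level 2.0 2.0)) ; distance equals the limit
(def fully-covered    (touch->gray-level 0.0 2.0)) ; object at the surface
(def half-covered     (touch->gray-level 1.0 2.0))
```

A feeler reporting its full length comes out black, and one reporting zero distance comes out white, matching the description above.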

8 Basic Test of Touch

The worm's sense of touch is a bit complicated, so for this basic test I'll use a new creature — a simple cube which has touch sensors evenly distributed along each of its sides.

(in-ns 'cortex.test.touch)

(defn touch-cube []
  (load-blender-model "Models/test-touch/touch-cube.blend"))

8.1 The Touch Cube


A simple creature with evenly distributed touch sensors.

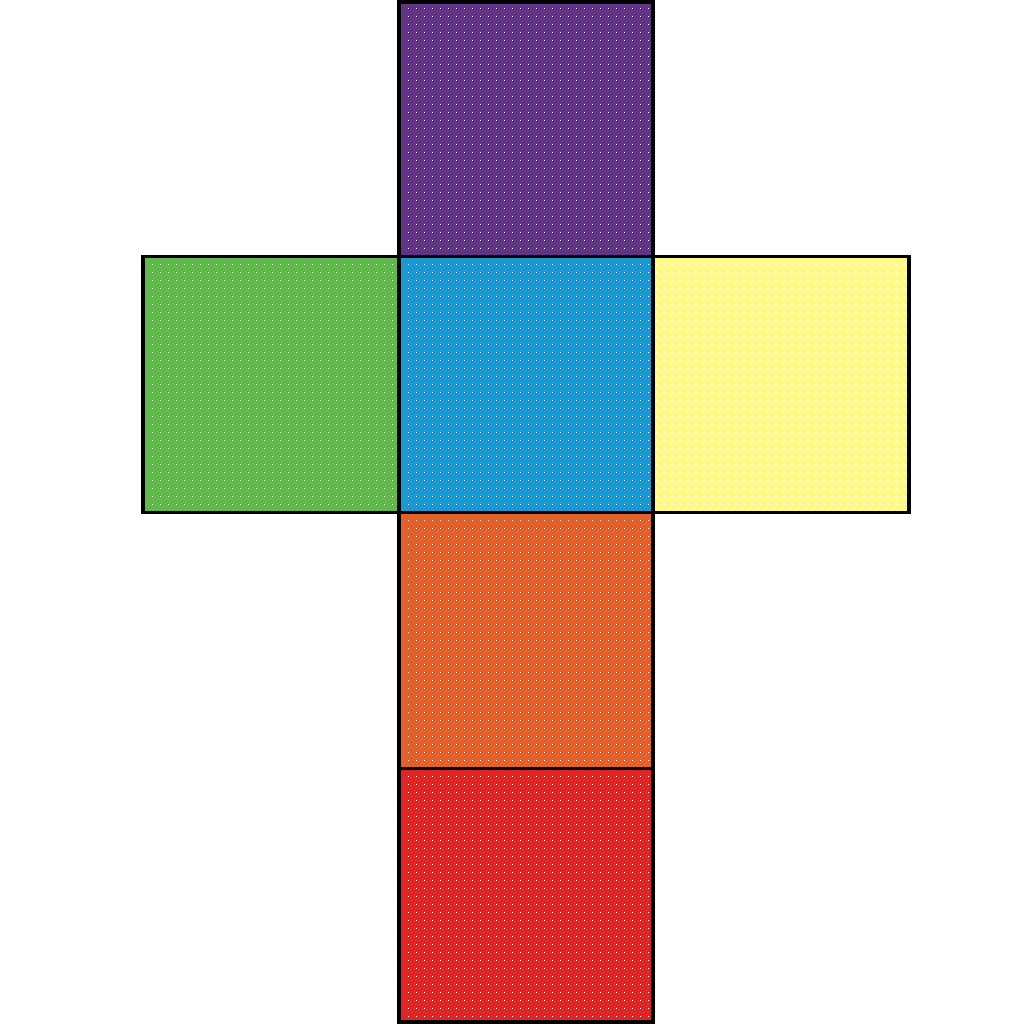

The tactile-sensor-profile image for this simple creature looks like this:

Figure 2: The distribution of feelers along the touch-cube. The colors of the faces are irrelevant; only the white pixels specify feelers.

(in-ns 'cortex.test.touch)

(defn test-basic-touch
  "Testing touch:
   You should see a cube fall onto a table. There is a cross-shaped
   display which reports the cube's sensation of touch. This display
   should change when the cube hits the table, and whenever you hit
   the cube with balls.

   Keys:
   <space> : fire ball"
  ([] (test-basic-touch false))
  ([record?]
     (let [the-cube (doto (touch-cube) (body!))
           touch (touch! the-cube)
           touch-display (view-touch)]
       (world
        (nodify [the-cube
                 (box 10 1 10 :position (Vector3f. 0 -10 0)
                      :color ColorRGBA/Gray :mass 0)])
        standard-debug-controls
        (fn [world]
          (let [timer (IsoTimer. 60)]
            (.setTimer world timer)
            (display-dilated-time world timer))
          (if record?
            (Capture/captureVideo
             world
             (File. "/home/r/proj/cortex/render/touch-cube/main-view/")))
          (speed-up world)
          (light-up-everything world))
        (fn [world tpf]
          (touch-display
           (map #(% (.getRootNode world)) touch)
           (if record?
             (File. "/home/r/proj/cortex/render/touch-cube/touch/"))))))))

8.2 Basic Touch Demonstration


The simple creature responds to touch.

8.3 Generating the Basic Touch Video

(ns cortex.video.magick4
  (:import java.io.File)
  (:use clojure.java.shell))

(defn images [path]
  (sort (rest (file-seq (File. path)))))

(def base "/home/r/proj/cortex/render/touch-cube/")

(defn pics [file]
  (images (str base file)))

(defn combine-images []
  (let [main-view (pics "main-view")
        touch (pics "touch/0")
        background (repeat 9001 (File. (str base "background.png")))
        targets (map
                 #(File. (str base "out/" (format "%07d.png" %)))
                 (range 0 (count main-view)))]
    (dorun
     (pmap
      (comp
       (fn [[background main-view touch target]]
         (println target)
         (sh "convert"
             touch
             "-resize" "x300"
             "-rotate" "180"
             background
             "-swap" "0,1"
             "-geometry" "+776+129"
             "-composite"
             main-view "-geometry" "+66+21" "-composite"
             target))
       (fn [& args] (map #(.getCanonicalPath %) args)))
      background main-view touch targets))))

cd ~/proj/cortex/render/touch-cube/

ffmpeg -r 60 -i out/%07d.png -b:v 9000k -c:v libtheora basic-touch.ogg

cd ~/proj/cortex/render/touch-cube/

ffmpeg -r 30 -i blender-intro/%07d.png -b:v 9000k -c:v libtheora touch-cube.ogg

9 Adding Touch to the Worm

(in-ns 'cortex.test.touch)

(defn test-worm-touch
  "Testing touch:
   You will see the worm fall onto a table. There is a display which
   reports the worm's sense of touch. It should change when the worm
   hits the table and when you hit it with balls.

   Keys:
   <space> : fire ball"
  ([] (test-worm-touch false))
  ([record?]
     (let [the-worm (doto (worm) (body!))
           touch (touch! the-worm)
           touch-display (view-touch)]
       (world
        (nodify [the-worm (floor)])
        standard-debug-controls
        (fn [world]
          (let [timer (IsoTimer. 60)]
            (.setTimer world timer)
            (display-dilated-time world timer))
          (if record?
            (Capture/captureVideo
             world
             (File. "/home/r/proj/cortex/render/worm-touch/main-view/")))
          (speed-up world)
          (light-up-everything world))
        (fn [world tpf]
          (touch-display
           (map #(% (.getRootNode world)) touch)
           (if record?
             (File. "/home/r/proj/cortex/render/worm-touch/touch/"))))))))

9.1 Worm Touch Demonstration


The worm responds to touch.

9.2 Generating the Worm Touch Video

(ns cortex.video.magick5
  (:import java.io.File)
  (:use clojure.java.shell))

(defn images [path]
  (sort (rest (file-seq (File. path)))))

(def base "/home/r/proj/cortex/render/worm-touch/")

(defn pics [file]
  (images (str base file)))

(defn combine-images []
  (let [main-view (pics "main-view")
        touch (pics "touch/0")
        targets (map
                 #(File. (str base "out/" (format "%07d.png" %)))
                 (range 0 (count main-view)))]
    (dorun
     (pmap
      (comp
       (fn [[main-view touch target]]
         (println target)
         (sh "convert"
             main-view
             touch "-geometry" "+0+0" "-composite"
             target))
       (fn [& args] (map #(.getCanonicalPath %) args)))
      main-view touch targets))))

cd ~/proj/cortex/render/worm-touch

ffmpeg -r 60 -i out/%07d.png -b:v 9000k -c:v libtheora worm-touch.ogg

10 Headers

(ns cortex.touch
  "Simulate the sense of touch in jMonkeyEngine3. Enables any Geometry
   to be outfitted with touch sensors with density determined by a UV
   image. In this way a Geometry can know what parts of itself are
   touching nearby objects. Reads specially prepared blender files to
   construct this sense automatically."
  {:author "Robert McIntyre"}
  (:use (cortex world util sense))
  (:import (com.jme3.scene Geometry Node Mesh))
  (:import com.jme3.collision.CollisionResults)
  (:import com.jme3.scene.VertexBuffer$Type)
  (:import (com.jme3.math Triangle Vector3f Vector2f Ray Matrix4f)))

(ns cortex.test.touch
  (:use (cortex world util sense body touch))
  (:use cortex.test.body)
  (:import (com.aurellem.capture Capture IsoTimer))
  (:import java.io.File)
  (:import (com.jme3.math Vector3f ColorRGBA)))

11 Source Listing

12 Next

So far I've implemented simulated Vision, Hearing, and Touch, the most obvious and prominent senses that humans have. Smell and Taste shall remain unimplemented for now. This accounts for the "five senses" that feature so prominently in our lives. But humans have far more than the five main senses. There are internal chemical senses, pain (which is not the same as touch), heat sensitivity, and our sense of balance, among others. One extra sense is so important that I must implement it to have a hope of making creatures that can gracefully control their own bodies. It is Proprioception, which is the sense of the location of each body part in relation to the other body parts.

Close your eyes, and touch your nose with your right index finger. How did you do it? You could not see your hand, and neither your hand nor your nose could use the sense of touch to guide the path of your hand. There are no sound cues, and Taste and Smell certainly don't provide any help. You know where your hand is without your other senses because of Proprioception.

Onward to proprioception!